Anecdotes often get a bad rap – sometimes deservedly. We have all seen examples of narratives plucked from the public smorgasbord and used to prop up a preconceived ideology. Given the prevalence of this often irresponsible and manipulative use of narrative [discussed further in the Huffington Post’s “The Allure of an Anecdote”], it is easy to lose faith in the power of stories. That loss of faith periodically leads to a surge in demand for hard data and evidence regarding everything from healthcare to higher education. But data and statistics take their fair share of heat as well. For one thing, it turns out that data analysis is subjective too. Data can be manipulated, massaged, and infused with bias. And strictly ‘objective’ quantitative analysis tends to come across as cold, devoid of feeling, and uninteresting. We know enough to know that numbers never tell the whole story. Standardized testing alone is a grossly inadequate assessment of educational enrichment, and when organizations uncompromisingly focus on ‘the bottom line,’ it makes most of us uncomfortable at best.

This methodological tension exemplifies how the solution is rarely found at the extremes. Unfortunately, these two approaches to knowing the world have such strong advocates and detractors that we are often drawn toward diametrically opposed camps along a false continuum. Compounding the problem, shoddy and irresponsible research at both ends of the spectrum is regularly circulated in mainstream media outlets.

This divorce is particularly problematic given that quality science, good journalism, and effective research tend to integrate the two. So-called “hard data and evidence” need narrative and story to provide validity, context, and vitality. On the other hand, anecdotes and narratives need “hard data and evidence” to provide reliability and to help separate the substantive from the whimsical. In responsible and effective research and analysis, the two methods are brought into synergy, working together as structure and foundation, flesh and bone. A series on poverty that The Philadelphia Inquirer printed in 2010 [“A Portrait of Hunger”] serves as a good example from the field of journalism. Done effectively, data and narrative are inextricably melded into a seamless new creation.

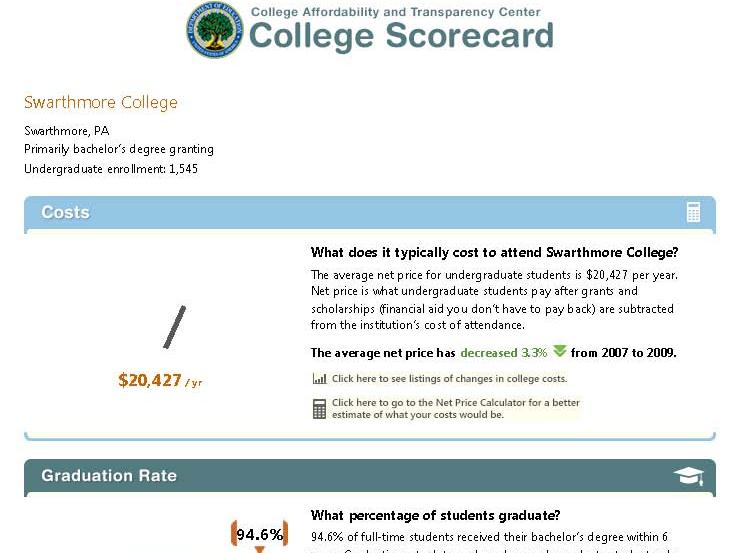

In my short time thus far in Institutional Research at Swarthmore, I have been impressed by many things, one of which is the way respect for research and evidence-based decision-making coexists with respect for stories, nuance, and humanity. When the values and mission of a college call for an environment that respects both, it facilitates the practice of effective and balanced institutional research.