There may be some situations where you would want to delete select records from an individual return file. For example, you may have a project where you are looking at student enrollment after graduation or transfer, and it is decided that your particular project will only include records for which a student was enrolled for more than 30 days in a fall/spring term or more than 10 days in a summer term. Or, you may have six years of records for a particular cohort, but you only want to examine records for four years. In both of these cases, you would want to delete the records that don’t fit your criteria before analyzing your data.
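
As a rough illustration of the first case, here is a minimal Python sketch of that kind of filter (the video itself uses SPSS syntax, not Python). The field names `term`, `begin`, and `end` and the sample records are hypothetical stand-ins, not the actual return file layout; only the thresholds come from the example above.

```python
from datetime import date

# Thresholds from the example in the text: a record is kept only if the
# student was enrolled for MORE than this many days in that type of term.
MIN_DAYS = {"fall": 30, "spring": 30, "summer": 10}

def days_enrolled(record):
    """Number of days between the enrollment begin and end dates."""
    return (record["end"] - record["begin"]).days

def keep_record(record):
    """True if the record meets the minimum-days rule for its term."""
    return days_enrolled(record) > MIN_DAYS[record["term"]]

# Made-up records; the field names are assumptions for illustration only.
records = [
    {"student": "A", "term": "fall", "begin": date(2012, 8, 27), "end": date(2012, 12, 14)},
    {"student": "B", "term": "fall", "begin": date(2012, 8, 27), "end": date(2012, 9, 10)},
    {"student": "C", "term": "summer", "begin": date(2013, 6, 3), "end": date(2013, 6, 21)},
    {"student": "D", "term": "summer", "begin": date(2013, 6, 3), "end": date(2013, 6, 10)},
]

# Drop the records that do not fit the criteria before any analysis.
kept = [r for r in records if keep_record(r)]
print([r["student"] for r in kept])
```

In this made-up data, students B and D fall short of the thresholds, so only the records for A and C remain.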

Video and SPSS Syntax: Admit/Not Enroll Project Using the National Student Clearinghouse Individual Detail Return File

I use the National Student Clearinghouse individual detail return file and SPSS syntax in this video to capture the first school attended for students who were admitted to my institution, but who did not enroll (names listed are not real applicants). In a future video, I’ll work on the same project using the aggregate report. I almost always use the individual detail return file since it provides so much information, but it does have a limitation that impacts this project.

Decisions, Decisions

I’ve been working with data from the National Student Clearinghouse (NSC) for a while now. A lot of wonderful information can be found in the NSC data, but the detailed return file can sometimes be a bit difficult to work with. There are so many ways the data can be sliced, and it can sometimes be challenging to determine how best to work with the data to present meaningful information to stakeholders.

Anecdotes versus Data: A False Dichotomy

Anecdotes often get a bad rap – sometimes deservedly. We have all seen examples of narratives plucked from the public smorgasbord and used to prop up a pre-conceived ideology. Given the prevalence of this often irresponsible and manipulative use of narrative [discussed further in the Huffington Post’s “The Allure of an Anecdote”] it is easy to lose faith in the power of stories. This periodically leads to a surge in demand for hard data and evidence regarding everything from healthcare to higher education. But data and statistics take their fair share of heat as well. For one thing, it turns out that data analysis is subjective too. Data can be manipulated, massaged, and infused with bias. And the strictly ‘objective’ quantitative analysis tends to come across as cold, devoid of feeling, and uninteresting. We know enough to know that numbers never tell the whole story. Standardized testing alone is a grossly inadequate assessment of educational enrichment and when organizations uncompromisingly focus on ‘the bottom line,’ it makes most of us uncomfortable at best.

This methodological tension is an exemplar of how the solution is rarely to be found in the extremes. Unfortunately, these two approaches to knowing the world have such strong advocates and detractors that we are often drawn toward diametrically opposed camps along a false continuum. Compounding the problem is that shoddy and irresponsible research at both ends of the spectrum is regularly circulated in mainstream media outlets.

This divorce is particularly problematic given that quality science, good journalism, and effective research tend to integrate the two. So-called “hard data and evidence” need narrative and story to provide validity, context and vitality. On the other hand, anecdotes and narratives need “hard data and evidence” to provide reliability, and to help separate the substantive from the whimsical. In responsible and effective research and analysis, the methodological dichotomy is brought into synergy, working together as structure and foundation, flesh and bone. The Philadelphia Inquirer printed a series on poverty in 2010 that serves as a good example from the field of journalism [“A Portrait of Hunger”]. Done effectively, data and narrative are inextricably melded into a seamless new creation.

In my short time thus far in Institutional Research at Swarthmore, I have been impressed by many things, one of which is the simultaneous respect for research and evidence-based decision making alongside respect for stories, nuance, and humanity. When the values and mission of a college call for an environment that respects both, it facilitates the practice of effective and balanced institutional research.

Data Disconnect

Sadly, Thursday’s “Headcount” blog of the Chronicle reports on another institution misrepresenting data that is used in the US News rankings. While there is plenty in the topic to be upset about, I found myself annoyed by this statement:

Nevertheless, replacing hard-and-fast numbers with mere estimates involves a conscious choice, and, it’s fair to assume, an intent to polish the truth.

Certainly there are situations when the intent is to “polish the truth,” and I have no idea whether this was the case at this institution, but I actually think it’s UNfair to assume the intent.

Numbers, numbers…

Most IR people are fascinated with numbers, logic, probability, and statistics, which is part of what draws us to our field. We like to poke at data, and think about what they can and cannot tell us about the phenomena they reflect. It’s not surprising that many in the profession think that Nate Silver is somewhat of a god. And so when one of our favorite numbers gurus addresses a topic in higher education, our day is made!

Yesterday in his blog, Five Thirty Eight, Nate Silver talked about what the changing numbers of college majors do and do not tell us about college programs and whether or not some majors are suffering from an increased emphasis on career-focused programs. He uses data from the National Center for Education Statistics – data provided by Institutional Researchers through our IPEDS reporting – and employs a standard IR approach in offering alternative perspectives on numbers: using a different denominator. No spoilers here, I couldn’t possibly do it justice anyway. Please go read.

As an added bonus, Silver mentions his own undergraduate experience at the University of Chicago and advocates broad, diverse studies. He didn’t explicitly mention “liberal arts education,” but at least a few of his readers’ comments do. Oh well, you can’t have everything!

Why IR is hiring

With an increasing amount of my time for the past two years spent with the Provost’s Office and the College in general helping to guide our assessment efforts, the IR Office has been struggling mightily to keep up with our work. In January we were approved for an additional limited term position in the IR office to help offset the loss of my time. The need for the position will be reevaluated in three years, which corresponds to the term of the new (second) Associate Provost position. This is not an accident. Since both positions are intended to relieve overloads caused, at least in part, by our needs for assessment and institutional effectiveness leadership, it makes sense to review this new infrastructure a few years down the road to see how it is serving us, as our assessment processes improve and our work becomes more routine.

With this additional IR position and Alex’s departure, I’ll be in a way replacing both Alex and myself, as I continue focusing more on assessment and on our upcoming accreditation mid-term report. But while Alex and I shared much of the responsibilities for IR reporting and research in the past, I’ll be structuring the two positions to more separately reflect these two key roles. A Data and Reporting Officer will have primary responsibility for data management, and routine and ad hoc reporting for internal and external purposes. An Institutional Research Associate (the limited term position) will focus more on special studies, and is expected to provide the advanced analytic skills to our projects. These two positions, and mine, will share somewhat in responsibilities and have just enough overlap to serve us in those many “all hands” moments. It should be an exciting time for Institutional Research – and for assessment!

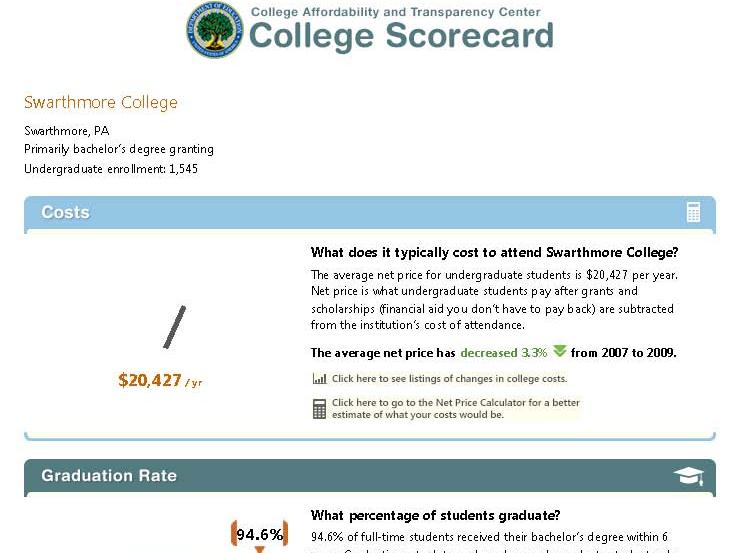

Keeping Score

President Obama announced the new “College Scorecard” in his State of the Union address, and the interactive online tool was released the next day. The intended purpose of the tool is to provide useful information to families about affordability and student success at individual colleges. Since then, the IR community has been buzzing. Much of the data in the tool is reported via the IR offices, and many of us are already being asked to explain the data and the way it is presented. Several of our listservs became quite busy as my colleagues compared notes on glitches in the lookup feature of the tool (zip code searches were problematic early on) and the accuracy of the data, and debated the clarity of the labels and the wisdom of the simple presentation.

This project is an example of a wonderful goal that is incredibly hard to execute well. Seeing all the press coverage (both mainstream and higher ed press) and hearing from my colleagues, I think about the balance of such a project. It seems reasonable that after thorough development and testing, there would be a point at which the best course of action is to just move forward and release it even though it is not perfect. But where is that point? One could argue whether this was the correct point for the Scorecard project, but all of the attention is creating increased awareness by the public, as well as pressures on the designers for improvement, and on colleges for accuracy and accountability.

I wonder how many people remember the clunky online tool, COOL (the College Opportunities On Line), from the early 00’s, and the growing pains that it went through as it evolved into the College Navigator, a pretty spiffy – and very useful – tool for families to find a wealth of information about colleges? These things evolve and if not useful and effective, won’t survive. The trick is not doing more harm than good while the kinks are worked out.

What’s in the Scorecard and where did it come from? The Scorecard has six categories of information: Undergraduate Enrollment, Costs, Graduation Rates, Loan Default Rate, Median Borrowing, and Employment. Information about the data and its sources can be found at the Scorecard website, but it takes a little work! Click on the far right square that says “About the Scorecard” on the middle row of squares. From the text that spins up, click “Here”, which opens another window (not sure if these are “pop-ups” or “floating frames”), and that’s where the descriptions are.

The data for the first three items come from our reporting to the federal government through the IPEDS (Integrated Postsecondary Education Data System), which I have posted about before. Here is yet another reason to make sure we report accurately! The next two categories, Loan Default Rate and Median Borrowing, get their data from federal reporting through the National Student Loan Data System (NSLDS). The last item, Employment, provides no actual data, but rather a sly nudge for users of the system to contact the institutions directly.

While each of these measures creates its own challenge to simplicity and clarity of explanation, one of the more confusing, and hence controversial, measures is the “Cost.” The display says “Net price is what undergraduate students pay after grants and scholarships (financial aid you don’t have to pay back) are subtracted from the institution’s cost of attendance.” This is an important concept, and we all want students to understand why they should not just look at the “sticker price” of a college, but at what students actually pay after accounting for aid. Some very expensive private colleges can actually cost less than public institutions once aid is factored in, and this is a very difficult message to get out! But the more precise definition behind the scenes (that floating frame!) says “the average yearly price actually charged to first-time, full-time undergraduate students receiving student aid at an institution of higher education after deducting such aid.” The first point of confusion is that this net price is calculated only for first-time, full-time, aided students, rather than averaged across all students. The second is the actual formula, which takes some more digging. It uses the “cost of attendance,” which is tuition, fees, room, and board, PLUS a standard estimate of the cost for books, supplies, and other expenses. The aid dollars include Pell grants, other federal grants, state or local government grants (including tuition waivers), and institutional grants (scholarship aid that is not repaid). And the third point that may cause confusion is, of course, the final, single figure itself which is an average, while no one is average.
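
To make the formula concrete, here is a minimal Python sketch of the calculation as I read the definition above. Every dollar amount is invented for illustration, and the function and parameter names are my own, not anything from the Scorecard or IPEDS.

```python
def average_net_price(tuition_fees, room_board, books_other, grants_per_student):
    """Cost of attendance minus average grant aid.

    grants_per_student holds the total grant and scholarship aid for each
    first-time, full-time, aided student; students without aid are left out,
    which is the first point of confusion noted above.
    """
    cost_of_attendance = tuition_fees + room_board + books_other
    average_grant = sum(grants_per_student) / len(grants_per_student)
    return cost_of_attendance - average_grant

# Invented figures: three aided students with very different aid packages.
grants = [30000, 20000, 10000]
price = average_net_price(
    tuition_fees=40000,
    room_board=12000,
    books_other=3000,
    grants_per_student=grants,
)
print(price)  # a single average, though no individual student here pays it
```

With these invented numbers the individual net prices would be 25,000, 35,000, and 45,000, which is exactly the kind of spread a single average hides.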

Will a family dig that deep? Would they understand the terminology and nuances if they did? Would they be able to guess whether their student would be an aid recipient, and if so, whether they’d be like the average aid recipient? The net price presentation that already exists in the College Navigator has an advantage over the single figure shown in the Scorecard, because it shows the value for each of a number of income ranges. While aid determinations are based on much more than simple income, at least this presentation more clearly demonstrates that the net price for individuals varies – by a lot!

“Optimal” Faculty to Staff Ratio

An article in the Chronicle today reports on a study by two economists about the optimal faculty to staff ratio. The study is focused on Research 1 and 2 public institutions, but I couldn’t stop myself from applying the simple math formula to a small liberal arts college, such as Swarthmore, to see what would happen.

We are actually freezing our employee data today, and so I don’t yet have current numbers, but based on last year’s data we had 944 employees – 699 full-time. The study identifies the optimal ratio as 3 tenure-track faculty to each full-time professional administrator. Using IPEDS reporting definitions, we had 162 tenured and on-track faculty members last year, and 242 full-time professional administrators (Executive/Administrative/Managerial, and Other Professional). That’s a conservative estimate of “professional administrators,” because it’s unclear to me from the paper which categories are included in the final equation. All non-faculty staff are considered at different points in their modeling.

So if that 3 to 1 ratio were desirable here, we would need to add 564 tenure-track faculty. I don’t know how the 242 administrators would manage all the new buildings and infrastructure we’d need. And our student to faculty ratio would drop to about 2:1. Alternatively, we could get rid of about 188 professional administrators to drop their total to 54. In that case our 162 faculty would have to start managing housing, administering grants, raising funds, supporting IT, doing IPEDS reporting, etc., in addition to all their regular responsibilities. I’m sure they’d enjoy that.
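
For anyone who wants to check my arithmetic, here is the simple math as a short Python sketch. The variable names are mine; the counts and the 3 to 1 ratio are the ones quoted above.

```python
TARGET_RATIO = 3          # tenure-track faculty per professional administrator
faculty = 162             # tenured and on-track faculty last year
administrators = 242      # full-time professional administrators last year

# Option 1: keep the administrators and hire faculty to reach the ratio.
faculty_needed = TARGET_RATIO * administrators
faculty_to_add = faculty_needed - faculty

# Option 2: keep the faculty and cut administrators to reach the ratio.
administrators_supported = faculty // TARGET_RATIO
administrators_to_cut = administrators - administrators_supported

print(faculty_to_add, administrators_supported, administrators_to_cut)
```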

Guess I’ll just have to wait until these researchers tackle this issue for liberal arts colleges.

The Importance of IPEDS

The IR responsibility of providing summary data to the federal government through the Integrated Postsecondary Education Data System (IPEDS) sounds like as much fun as completing tax forms. And as a matter of fact that analogy pretty much captures it! It’s an obligation of all institutions that participate in any kind of Title IV funding programs (federal student financial aid), which means that like death and taxes, it affects just about all of us. Assembling and providing this information is not always easy, but it’s a responsibility that we take very seriously, and we do our best to work effectively with our colleagues internally so that we provide the most accurate data possible.

I recently attended a workshop to become a “trainer” for IPEDS. The Association for Institutional Research (AIR) works with the National Center for Education Statistics (NCES) to provide training and support for both submitting data and using the data that NCES makes available to the public. It’s a really wonderful program of online tutorials, face-to-face workshops, and other activities that promote understanding of this important resource, and I’m excited about being involved. I’ve always been a girl scout about this stuff anyway, but the workshop reinforced just how valuable and PUBLIC! a resource this is. Once submitted (and after the agency’s review and consistency checking) this information becomes available to the public through the IPEDS Data Center. That means that anyone can use it… and they do! Policy analysts, legislators, reporters, grant agencies, prospective students, administrators at peer institutions, accreditors, job-seekers, higher education researchers, the list is endless. The accuracy of data can reflect on individual institutions – you really don’t want to show up on the U.S. Department of Education’s list of institutions with the fastest increasing tuition because you couldn’t be bothered to double-check your numbers – but it also has implications for policy, research conclusions, and many other decisions that affect the higher education community.